Reading time: 2 minutes

Word count: 235

In this episode, E2, I take a look at project inception from a very high level of flight, the so-called golden eagle level. In my career, especially as a contractor, project inception has usually started long before the day I join the project. A contractor's job is to deliver the functionality, “hit the ground […]

Reading time: 2 minutes

Word count: 350

PPJWT Countdown Day 1 – “Yo Drive Control” Release day is nearly upon us. This is a short extract of my 2020 / 2021 online video course. It is very nearly there, I promise. To be really honest, this video course is already three months late. I am disappointed by my timekeeping, but I realise this […]

Reading time: 1 minute

Word count: 135

How to improve your performance in the tech industry? https://vimeo.com/techworkperf

- The best laid plans
- The mouse gets the cheese
- Interview preparation: your confidence boost
- First impressions
- Prep work
- How to respond to questions?
- How to deal with panels?
- Your chance to shine, how to ask questions?
- How to deal with pairing tests?
- How to deal […]

Reading time: 4 minutes

Word count: 858

After such a long time, I’m excited to announce the availability of my second book, Digital Java EE 7 Web Application Development. It is available from Packt Publishing. These are the chapters and appendices: Chapter 1: Java EE 7 Platform for Digital; Chapter 2: Java Server Faces Introduction; Chapter 3: Building […]

Reading time: 2 minutes

Word count: 425

I never give two references up front in an interview process. It seems to me that some people (recruiters, talent acquisition hunters and researchers) are just chancing their arm to get into my business social network or to pump me for information. If you know of Kevin Mitnick, then you probably know about social engineering too. This […]

Reading time: 10 minutes

Word count: 2156

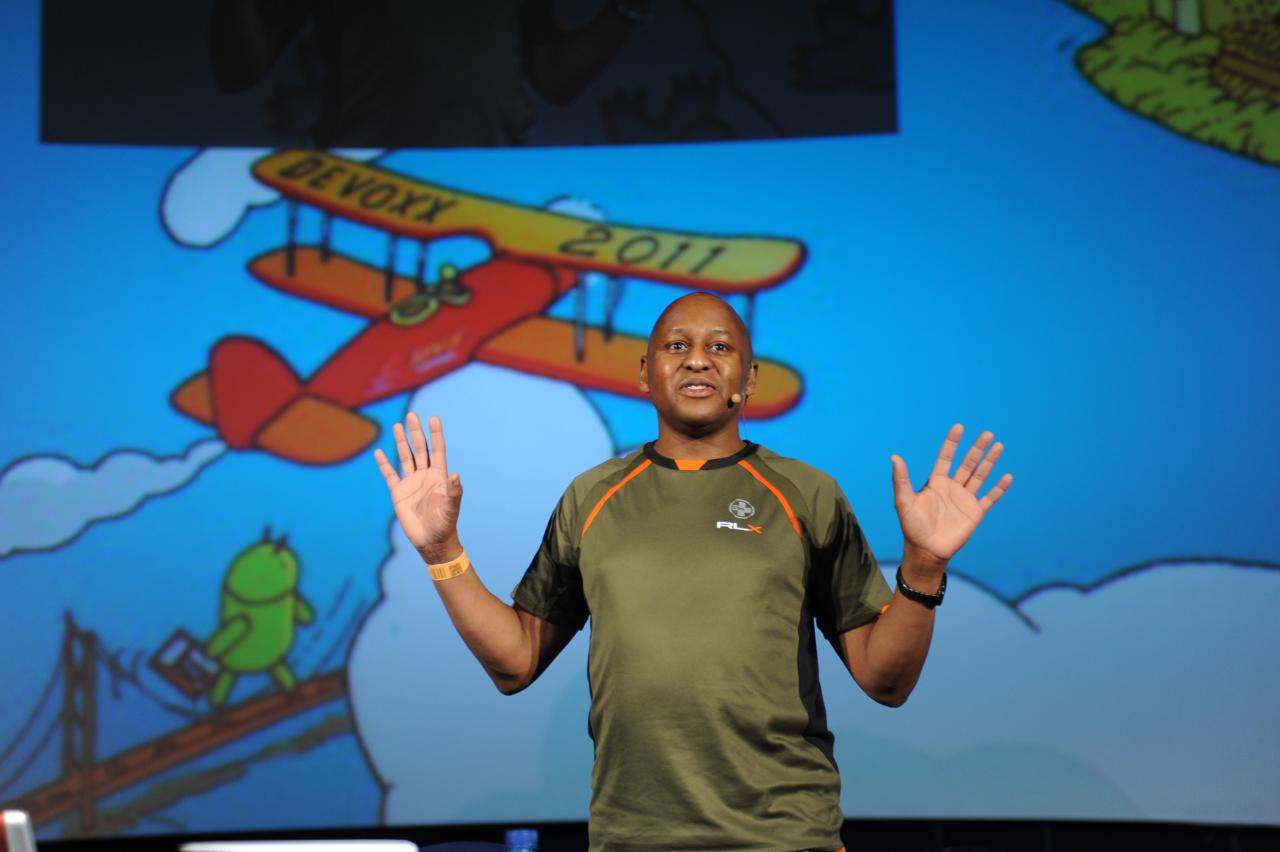

I was fortunate to attend the QCon London 2012 conference this year. Firstly, I was delighted to be invited as a speaker and then, when that did not pan out, they still let me in as a VIP guest. I would like to give special thanks to the QCon / Trifork staff for the awesome gift. […]

Reading time: 1 minute

Word count: 38

Somehow my machine lost a blog entry on the Functional Exchange. Anyway, better late than never. Audioboos: Listen! Listen! Listen! Pics: Simon Peyton Jones. Sorry, I do not have the time to recover the rest of this blog entry.

Hey all! Thanks for visiting.

I provide fringe benefits to interested readers: check out consultancy, training or mentorship.

Please make enquiries by email or call +44 (0)7397 067 658.

Due to the UK government's Off-Payroll Working plan, I am enforcing stricter measures on contracts.

All potential public sector GOV.UK contract engagements must be approved by QDOS and/or SJD Accounting.

Please enquire for further information.